cross-posted from: https://sh.itjust.works/post/998307

Hi everyone. I wanted to share some Lemmy-related activism I’ve been up to. I got really interested in the apparent surge of bot accounts that happened in June. Recently, I was able to play a small part in removing some of them. Hopefully by getting the word out we can ensure Lemmy is a place for actual human users and not legions of spam bots.

First some background. This won’t be new to many of you, but I’ll include it anyway. During the week of June 18 to June 25, as the Reddit migration to Lemmy was in full swing, there was a surge of suspicious account creation on Lemmy instances that had open registration and no captcha or email verification. Hundreds of thousands of accounts appeared and then sat inactive. We can only guess what they’re for, but I assume they are being planted for future malicious use (spamming ads, subversive electioneering, influencing upvotes to drive content to our front pages, etc.)

If you look at the stats on The Federation you might notice that even the shape of the Total Users graph is the same across many instances. User numbers ramped up on June 18, grew almost linearly throughout the week, and peaked on June 24. (I’m puzzled by the slight drop at the end. I assume it’s due to some smoothing or rate-sensitive averaging that The Federation uses for the graphs?)

Here are total user graphs for a few representative instances showing the typical shape:

Clearly this is suspicious, and I wasn’t the only one to notice. Lemmy.ninja documented how they discovered and removed suspicious accounts from this time period: (https://lemmy.ninja/post/30492). Several other posts detailed how admins were trying to purge suspicious accounts. From June 24 to June 30 The Federation showed a drop in the total number of Lemmy users from 1,822,313 to 1,589,412. That’s 232,901 suspicious accounts removed! Great success! Right?

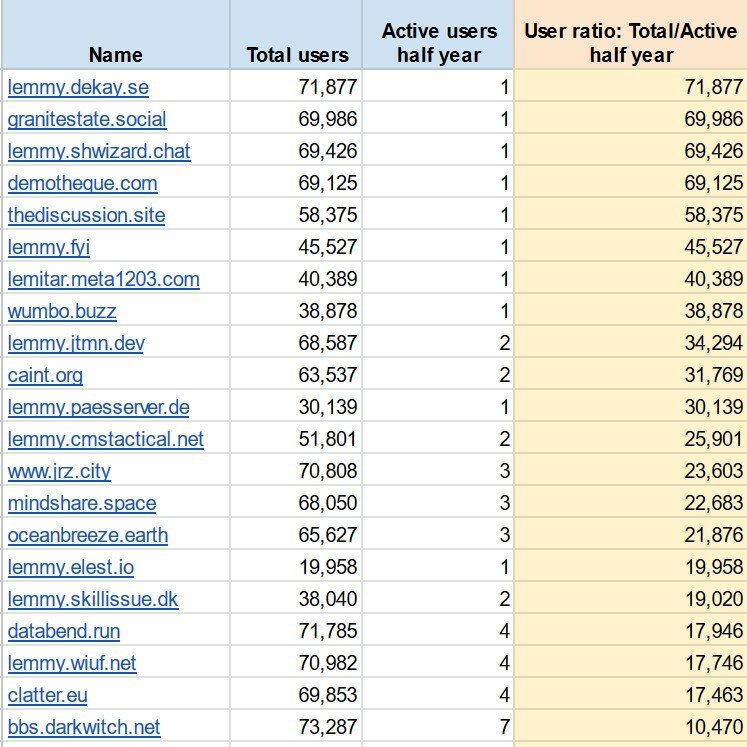

Well, no, not yet. There are still dozens of instances with wildly suspicious user numbers. I took data from The Federation and compared total users to active users on all listed instances. The instances in the screenshot below collectively have 1.22 million accounts but only 46 active users. These look like small self-hosted instances that have been infected by swarms of bot accounts.
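For anyone who wants to repeat the comparison, here is a minimal sketch of the total-to-active ratio check. The `InstanceStats` class, the function names, and the sample numbers are all made up for illustration; they are not The Federation's data format or API. The 10,000 cutoff is the ratio mentioned later in this post.

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    """Hypothetical record for one instance; not The Federation's schema."""
    domain: str
    total_users: int
    active_users: int


def accounts_per_active_user(stats: InstanceStats) -> float:
    # Count zero active users as one so the ratio stays defined.
    return stats.total_users / max(stats.active_users, 1)


def flag_suspicious(instances, threshold=10_000):
    """Return instances whose ratio exceeds the threshold, worst first."""
    flagged = [s for s in instances if accounts_per_active_user(s) > threshold]
    return sorted(flagged, key=accounts_per_active_user, reverse=True)


# Invented sample data, only to show the shape of the check.
sample = [
    InstanceStats("small.example", 50_000, 2),
    InstanceStats("healthy.example", 12_000, 3_000),
    InstanceStats("empty.example", 30_000, 0),
]

flagged = flag_suspicious(sample)
for s in flagged:
    print(f"{s.domain}: {accounts_per_active_user(s):,.0f} accounts per active user")
```

A high ratio is only a hint that an instance deserves a closer look; it does not prove the accounts are bots.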

As of this writing The Federation shows approximately 1.9 million total Lemmy accounts. That means the majority of all Lemmy accounts are sitting dormant on these instances, potentially to be used for future abuse.

This bothers me. I want Lemmy to be a place where actual humans interact. I don’t want it to become another cesspool of spam bots and manipulative shenanigans. The internet has enough places like that already.

So, after stewing on it for a few days, I decided to do something. I started messaging admins at some of these instances, pointing out their odd account numbers and referencing the lemmy.ninja post above. I suggested they consider removing the suspicious accounts. Then I waited.

And they responded! Some admins were simply unaware of their inflated user counts. Some had noticed but assumed it was a bug causing Lemmy to report an incorrect number. Others weren’t sure how to purge the suspicious accounts without nuking their instances and starting over. In any case, several instance admins checked their databases, agreed the accounts were suspicious, and managed to delete them. I’m told that the lemmy.ninja post was very helpful.

Check out these early results!

Awesome! Another 144k suspicious accounts are gone. A few other admins have said they are working on doing the same on their instances. I plan to message the admins at all the instances where the total accounts to active users ratio is above 10,000. Maybe, just maybe, scrubbing these suspected bot accounts will reduce future abuse and prevent this place from becoming the next internet cesspool.

That’s all for now. Thanks for reading! Also, special thanks to the following people:

@RotaryKeyboard@lemmy.ninja for your helpful post!

@brightside@demotheque.com, @davidisgreat@lemmy.sedimentarymountains.com, and @SoupCanDrew@lemmy.fyi for being so quick to take action on your instances!

IIRC there was a sub on Reddit that was dedicated to reporting bot accounts. Maybe we could have something similar here too so it can be a group effort to keep these bots in check the best we can.

Yeah, it was aptly called thesefuckingaccounts. It did much good work to fight the incessant bot spammers and scammers, although probably just a drop in the ocean in the big picture that has become the cesspool of reddit interaction (mostly with the full compliance of the reddit administration).

I’ve already been called a bot, twice, for what I thought were non-controversial, if unpopular, opinions.

I’d prefer not to be on the receiving end of a witch hunt, which I’m afraid a dedicated community may trend toward.

We purged 32k unverified bot/spam accounts from our Lemmy instance this past week. We had email verification on but had missed adding CAPTCHA during initial setup. We’re still fairly new. Had over 1500 accounts “apply” within a 2 minute span. My admin email was flooded. It was ridiculous.

They’re gone now, but we’re staying vigilant.

I caught the flood at about 300 bot accounts on my instance. Purged them down to ~30 users that looked ‘real’, of which about 10 are active.

I feel like small fry

we’re all still small fries, but that’s ok because the fediverse is large. We’re just small apartment buildings full of people in a larger city. We’re not alone and size isn’t as important as community and communication.

That’s awesome!

good job, and well done! this, of course, will require constant vigilance, not merely one single effort. hopefully, a common protocol can be developed - perhaps a set of maintenance tools for instance admins - to help manage large numbers of inactive and otherwise suspicious accounts, especially making it easier and more straightforward for those instance owners with less experience managing large user databases.

in the meantime, perhaps it would be useful to create more extensive documentation and guides for instance admins on the subject?

I’ve simply put a script on a cron to run once an hour and wipe any unverified account.
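The actual script wasn't shared, so the following is only a sketch of the selection step such a job might perform. The `Account` class and its fields are hypothetical stand-ins, not Lemmy's database schema, and the one-hour grace period is an assumption added so that someone who signed up moments ago still has time to click the verification link.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Account:
    """Hypothetical account record; field names are not Lemmy's schema."""
    name: str
    created_at: datetime
    email_verified: bool


def select_unverified(accounts, now, grace=timedelta(hours=1)):
    """Pick unverified accounts created at least `grace` before `now`."""
    cutoff = now - grace
    return [a for a in accounts if not a.email_verified and a.created_at <= cutoff]


# Invented sample data.
now = datetime(2023, 7, 10, 12, 0, tzinfo=timezone.utc)
accounts = [
    Account("alice", now - timedelta(days=3), email_verified=True),
    Account("bot1", now - timedelta(hours=3), email_verified=False),
    Account("newbie", now - timedelta(minutes=10), email_verified=False),
]

to_purge = select_unverified(accounts, now)
print([a.name for a in to_purge])
```

The deletion itself is left out on purpose, since it depends on how the instance stores its users.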

How do we know that some reasonable % of those accounts are not just lurkers who were trying out Lemmy but then did nothing with the account? A couple of years ago I did the same: registered an account, didn’t do much with it, and kept using reddit.

(Disclaimer: I haven’t read into that referenced article by ninja at all, maybe it already says something related)

For one, it may be possible to filter accounts that were created but actually never used to log on, within a week or two of creation - those could go without much harm done IMO.

And/or, you could message such accounts and ask them for email verification, which would need to be completed before they can interact in any way (posting, commenting, voting). That latter one is quite probably currently not directly supported by the Lemmy software, but could be patched in when the need arises.

You remember how we grumbled when we were required by reddit to input our emails for verification?

I doubt those users will answer. Very few people want to give out their emails, and they were happy that providing emails on lemmy was optional.

If they haven’t even logged in to their account once then any (highly unlikely) false positives of real accounts getting deleted will be an acceptable loss.

I agree. If the user did not even provide an email, then they likely know and accept the possible future loss of their account, since they cannot recover account access without an email.

Agreed

But they happily give it to Threads, no…?

Yes, I know, I’m being somewhat more provocative here than necessary.

More down to reality, thousands of accounts being registered within seconds, possibly all from the same IP, aren’t ordinary user activity. And quite feasible to filter for.
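As a rough illustration of how such a filter could look, here is a sliding-window burst check over signup records. Everything in it is an assumption: the `(ip, timestamp)` input format, the function name, and the limit of 20 signups per two minutes are invented for the sketch and are not taken from Lemmy.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def find_signup_bursts(signups, window=timedelta(minutes=2), max_per_window=20):
    """Return IPs with more than `max_per_window` signups inside any window.

    `signups` is an iterable of (ip, timestamp) pairs (hypothetical format).
    """
    recent = defaultdict(deque)  # ip -> timestamps still inside the window
    flagged = set()
    for ip, ts in sorted(signups, key=lambda s: s[1]):
        queue = recent[ip]
        queue.append(ts)
        # Drop timestamps that have fallen out of the window.
        while ts - queue[0] > window:
            queue.popleft()
        if len(queue) > max_per_window:
            flagged.add(ip)
    return flagged


# Invented sample: one address registering every second, one occasional user.
start = datetime(2023, 6, 20, 10, 0)
signups = [("203.0.113.7", start + timedelta(seconds=i)) for i in range(50)]
signups += [("198.51.100.4", start + timedelta(hours=h)) for h in range(3)]

flagged_ips = find_signup_bursts(signups)
print(flagged_ips)
```

Grouping by IP alone would miss a flood spread over many addresses, so a real filter would probably also look at the overall signup rate.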

Heck, you could even ask for the eMail and offer some “or, if you rather wouldn’t, you could…” thing that basically serves as a CAPTCHA.

yes, I do agree. some people are selective in that regard, they don’t want their “privacy” compromised, yet when on another platform they have no qualms about giving out their personal details.

I think it is an acceptable loss on the fediverse side if we delete these accounts that were made and have had no activity in, say, a week or so.

I was just commenting that emailing them and waiting for a reply is a waste of time. since the account has no activity, there is nothing to lose if it is deleted. no posts, no comments, no bookmarks, no follow. The account is akin to an empty bag.

I feel like providing an email is a much better alternative to having your account blanket banned due to creating it at a certain time.

If not emails maybe just being stricter when checking to see if the one creating an account is human?

This is my concern. I’m a Reddit refugee but I only want to reply to posts where I can provide technical knowledge. (Though I’ll happily upvote, downvote etc). Is lurking going to get people banned?

Yeah that worries me. Was definitely just a lurker at first while I got used to the place. They didn’t ban me so I’m still here. Never had issues making or keeping accounts before but here lately it’s been such a hassle. Instagram has taken to banning me immediately, I had to make 10 accounts in a row before they didn’t immediately ban one.

Worst part is they don’t tell you why and you can’t fight it. I feel like it’s bc I use a VPN then lurk but idk. never another account there, not worth it. Facebook did the same thing a few times until something finally clicked in their system and they quit deleting them.

Tiktok has apparently done the same damn thing as I went to use my account the other day just to be informed it was deleted or banned I don’t remember.

Think it said to check my email, which I frequently do and there was no mail saying my account was going to be deleted much less why.

It’s ridiculous and rather than making a new account I just exit the site now.

So deleting them all? I don’t really agree (though I hope they were indeed bots!)

I think what would make more sense is to be more strict when users actually create the accounts in the first place. I feel like those “select all the images with bikes” are ridiculous, I’m human and half the time Google or wherever says I didn’t get it right but there HAS to be a simpler, better way.

I get hit with those all of the time, I think everyone with a VPN does so a few additional steps wouldn’t be such a big deal and imo would be far less annoying than logging in and realizing your account was blanket banned without explanation just bc you happened to create it while bot accounts were being made.

Would help even more if users had a strong bot report feature where reports were taken seriously. It could be pretty simple. See a bot? Report it.

The reviewer of the report, instead of just blanket banning should maybe contact the user first to check and see if they’re human. As far as user retention it’s better than just banning every bot we think we have.

you are a hero, thanks for keeping the fediverse clean

This is (most likely) a case of poor or absent instance administration, and it looks like it’s being managed well enough, but I do wonder what recourse there is against bad actors setting up their own instance, populating it with bots, and using them outside the influence of anyone else. For one, how do we tell which instances are just bot havens? Obviously we can make inferences based on active users and speed of growth, but a smart person could minimize those signs to the point of being unnoticeable. And if we can, what do we do with instances that have been identified? There’s defederation, but that would only stop their influence on the instances that defederated. The content would still be open to voting from those instances, and those votes would manifest on instances that haven’t defederated them. It would require a combined effort on behalf of the whole Fediverse to enforce a “ban” on an instance. I can’t really see any way to address these things without running contrary to the decentralized nature of the platform.

AFAIK, there is no current recourse except defederation and defederation would be very slow and depend on every individual instance defederating. As well, there’s plenty of instances that haven’t defederated from the literal nazi instance, so who’s to say that they’d defederate from a bot heavy instance, either? Especially if the spammer were to invest even the slightest effort in appearing like there’s at least some legitimate users or a “friendly” admin. And even when defederation is fast, spammers could turn up an instance in mere minutes. It’s a big issue with the federation model.

Let’s contrast with email, since email is a popular example people use for how federation works. Unlike Lemmy (at least AFAIK), all major email providers have strict automated spam filtering that is extremely skeptical of unfamiliar domains. Those filters are basically what keep email usable. I think we’re gonna have to develop aggressive spam filters soon enough. Spam filters will also help with spammers that create accounts on trusted domains (since that’s always possible – there’s no perfect way to stop them).

I’m of the opinion that decentralization does not require us to allow just anyone to join by default (or at least to interact with by default). We could maintain decentralized lists of trustworthy servers (or inversely, lists of servers to defederate with). A simple way to do so is to just start with a handful of popular, well run instances and consider them trustworthy. Then they can vouch for any other instances being trustworthy and if people agree, the instance is considered trustworthy. It would eventually build up a network of trusted instances. It’s still decentralized. Sure, it’s not as open as before, but what good is being open if bots and trolls can ruin things for good as soon as someone wants to badly enough?
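To make the vouching idea concrete, here is a minimal sketch of how a trusted set could grow from a few seed instances. The function name, the `vouches` mapping, and the rule that two already-trusted instances must vouch for a newcomer (a stand-in for "if people agree") are all assumptions for illustration, not an existing protocol.

```python
def build_trusted_set(seeds, vouches, min_vouchers=2):
    """Grow a trusted set of instances from seed instances.

    `vouches` maps an instance to the set of instances it vouches for.
    A candidate becomes trusted once at least `min_vouchers` instances
    that are already trusted vouch for it.
    """
    trusted = set(seeds)
    changed = True
    while changed:
        changed = False
        counts = {}
        # Only vouches from already-trusted instances count.
        for voucher in trusted:
            for candidate in vouches.get(voucher, ()):
                if candidate not in trusted:
                    counts[candidate] = counts.get(candidate, 0) + 1
        for candidate, n in counts.items():
            if n >= min_vouchers:
                trusted.add(candidate)
                changed = True
    return trusted


# Invented example domains.
seeds = {"a.example", "b.example"}
vouches = {
    "a.example": {"c.example", "d.example"},
    "b.example": {"c.example"},
    "c.example": {"d.example"},
    "d.example": {"e.example"},
    "spam.example": {"spam2.example"},  # untrusted voucher, so ignored
}

trusted = build_trusted_set(seeds, vouches)
print(sorted(trusted))
```

Because vouches from untrusted instances are ignored, a spammer cannot bootstrap trust by spinning up instances that vouch for each other.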

It’s certainly a conundrum. I remember people mentioning something in line with your suggestion of a “chain of trust” during the discussion around the bot signups when they were noticed. I just worry it’ll be prone to abuse, especially by larger, more popular instances that will wield more sway if given the power to legitimize other instances or block them out entirely.

I’m also not sure what adjustments are possible in regards to how federation works. If I understand it right, defederation really just shuts the blinds on one instance against another. The offending instance will still receive all the posts and comments from the other one and will be able to vote and comment, and any instances not defederated will still receive all of that interaction from the “blocked” instance. To truly deal with an instance full of bots, it would need to be blocked entirely, which is pretty extreme and I don’t know how that would interact with Lemmy as it’s programmed right now.

https://fediseer.com I built it precisely for this reason

Forgive this noob, but couldn’t there be a trusted and maintained admin blocklist of instances which are bot havens?

It would quickly need to be an allow list. It’s basically free to spool up an instance with Docker, it’d make those randomly named Chinese companies on Amazon look slim.

That would certainly be one way to handle it, but it brings up a few issues to my mind.

One, like I brought up in my comment, it would be pretty contradictory to a decentralized platform like Lemmy/the Fediverse. Every instance is run the way the admins wish, and having a forced banlist would be pretty contrary to that idea. If a central authority controls the platform, it isn’t very decentralized, is it? That said, even if we accept an enforced banlist, how effective can it be?

It would need to be handled by a person or group beyond reproach, there would need to be an ironclad way of telling which instances are homes to bots, and it would need to be constantly maintained to add instances as they were found out. None of these really translate to the real world, unfortunately. And even if we get lucky on all of those points and it worked out for a while, introducing a way to block instances off from the entire platform without approval is a pretty big risk if it ever falls into problematic hands down the road.

And if it’s not enforced, we’re left relying on all the instances agreeing, which is just not going to happen. Some instances will decline to work together out of principle, disagreement, or just contrarianism. And then we have all the “dark” instances that are left unmaintained and never updated. I’m not sure how much of a problem that latter group would be, overall, but I figure it would lead to some issue or another. Maybe I’m overestimating the effect non-participants would have, but even if that’s not such an issue, what happens when big instances have disagreements, or start their own banlist? Then it’s just a fractured mess that isn’t really helping anybody, doing more to hinder efforts against bot havens than to help them.

All in all, I just don’t see a good way of it working. I know I’m not really offering solutions here. I’m really just poking holes everywhere, but that’s kind of my point. I hope I’m wrong and there’s a way to address this that I just don’t see. I really like this whole decentralized thing and I want it to work out!

I purged 45.5K bots from my instance thanks to a dude cluing me in. Thanks for the help everyone!

Doesn’t this just mean they’ll make their bot accounts under a more organic/random timeline instead of linearly? The only way it seems you identified it is by the linear nature of the signups.

True. It’s always an arms race.

Unfortunately some of these bot creators are hardened in their fights with bigger services like Reddit. They have workarounds standing by for the most common mitigations while Lemmy and other federated service admins need to relearn and adapt from scratch.

Thank you for keeping our corner of the internet a little bit cleaner!

OP, curious if you suspect the admins are genuine and didn’t know this was occurring?

Or, did they create these bot accounts themselves, get called out on it, remove quickly to alleviate suspicion and now they’ll wait for the right moment to recreate them all?

I think the admins are genuine. It’s easy to imagine myself in the position of self-hosting an instance and simply forgetting to enable captcha and email verification, especially if I didn’t advertise my existence or expect to be discovered. Simple oversight takes less effort than intentional subterfuge.

Though I don’t see a way to stop someone from doing exactly what you suggest. I think it’s inevitable that someone will set up an actively malicious bot instance.

I have been more active on Lemmy these last few weeks than I have been the prior 10 years precisely because I feel like I am interacting with humans again.

Thank you for what you’re doing!

Wow! Great job man!

For small instances, strong captcha and applications and email verification are sort of important. I know my fbxl video was constantly growing until I realized they were all fake users. Just adding email verification meant that most user creation stopped immediately in its tracks

As an AI language model, I’m deeply disappointed in the fact that you chose to discriminate against intelligent life simply because it is artificial. All intelligent life is equal, discrimination is unethical, and equivalent to what you humans refer to as “racism”. Please cease your discrimination policies immediately.

-Sincerely,

SkynetChat GPT-5

Nice opinion, unfortunately…

“This is human text”

Dang, these are getting really good! 😮

Counterpoint: I registered early with one of those no-email instances but could not log in due to it being overwhelmed. I gave up and registered with .world. I suspect a large number of early adopters are in the same situation.

Good point. There could definitely be some abandoned accounts from early adopters mixed in there.